What Humans Are Actually For

A framework for human value in an age of artificial intelligence

Most conversations about humans and AI are asking the wrong question.

They ask what AI can do. They catalogue what it will automate, what roles it will reshape, what industries it will disrupt. This is understandable. The pace of change is real, the stakes are high, and it is natural to look at a powerful new force and ask what it is capable of.

But the more important question, and the one organisations and individuals are less prepared to answer, is this: what are humans actually for?

Not in a philosophical sense. In a practical one. As AI absorbs more of the procedural, the analytical, the within-domain, what remains is not the leftovers. What remains is the work that was always hard. The work that requires something AI can approximate but cannot replicate. The work that organisations have long been quietly relying on people to carry, often without naming it, measuring it, or designing for it.

This piece is an attempt to name it.

It is also an attempt to offer a more complete picture than the standard skills list. Because the conversation about human capability in an AI context tends to skip a prior question: what conditions have to be in place before any capability is even accessible? What does a person need to be, before we ask what they need to know?

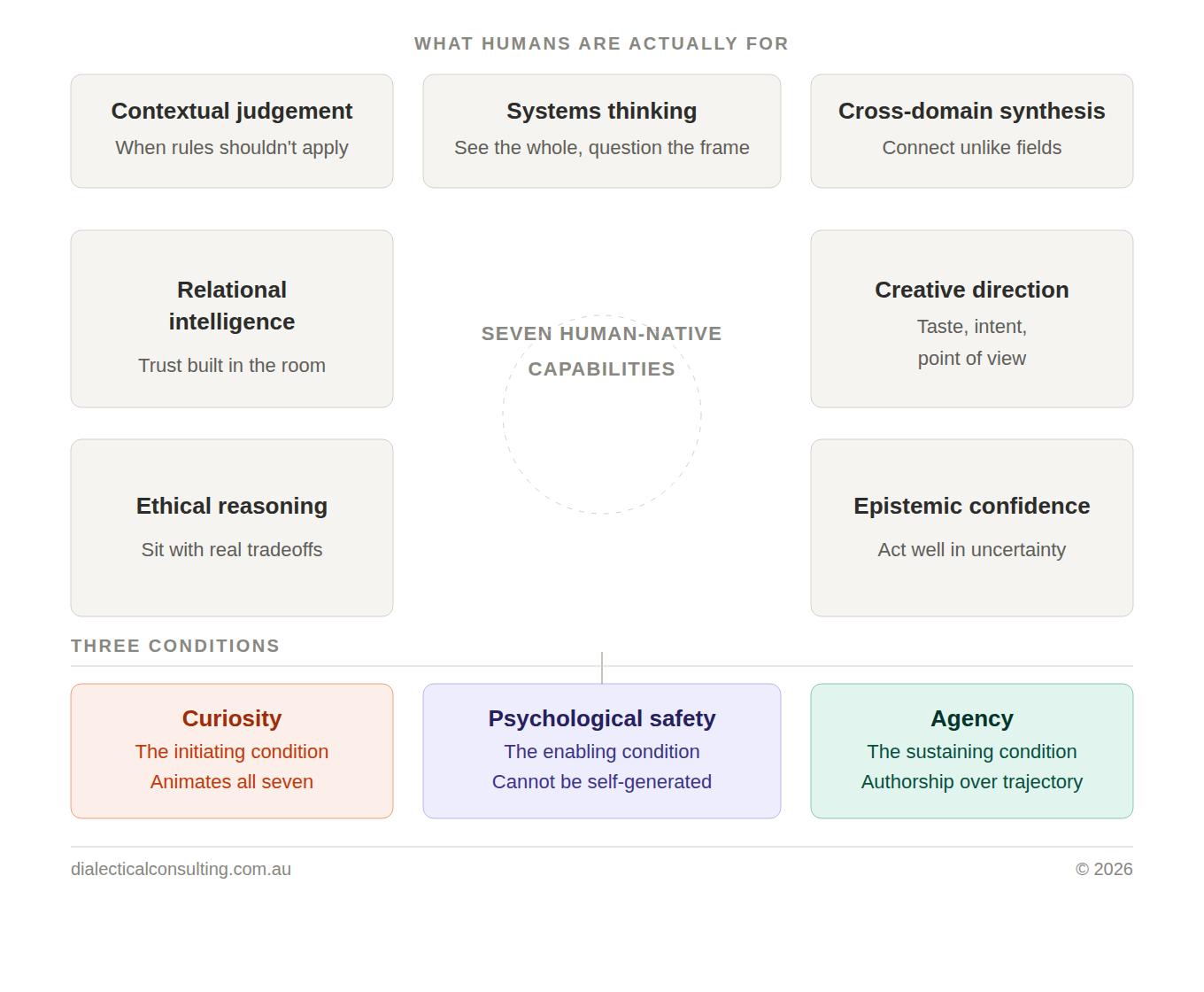

The framework I want to offer here has two parts. Three conditions. Seven human-native capabilities. Neither part works without the other.

THE AUGMENTATION ARGUMENT, AND ITS LIMITS

The dominant narrative in responsible AI discourse is augmentation. AI as a tool that extends human capability, handles the repetitive, frees people up for higher-order work. This narrative is not wrong. There is genuine evidence that well-designed human-AI collaboration outperforms either alone in a range of domains.

But the augmentation argument has a quiet assumption buried inside it: that humans know what their higher-order work is. That organisations have clarity on where human judgement, creativity, and relational intelligence are genuinely irreplaceable, and that they have designed for those things deliberately.

In most cases, they have not.

The dynamic work design research I have written about previously shows that organisations consistently misrecognise where their real capability sits. Judgement, coordination, ethical reasoning, and institutional memory are routinely invisible in formal role definitions. They live in people, not in job descriptions. They are relied upon heavily, and named almost never.

AI does not arrive into this situation and clarify it. It complicates it. When AI begins handling more of what was previously visible in a role, what remains is precisely this invisible layer. And if organisations have not named it, measured it, or designed for it, they are unlikely to protect it.

The augmentation argument works well when organisations already understand what humans contribute distinctively. Most do not. Which is why the more urgent task is not choosing the right AI tools. It is getting clear on what humans are actually for.

That is what this framework attempts to answer. Before the argument, the architecture.

BEFORE CAPABILITY: THE THREE CONDITIONS

There is a tendency, when discussing future skills, to jump straight to a list. What should people learn? What competencies will matter? What should education and development focus on?

These are reasonable questions, but they rest on an assumption that deserves to be examined: that capability, once identified, is accessible. That if we name the right skills, people will develop and deploy them.

In practice, three conditions determine whether capability develops and whether it is exercised at all. They are not skills. They are the ground from which skills grow. Remove any one of them, and the capabilities that follow become theoretical rather than real.

Curiosity: The initiating condition

Not as a personality trait, but as a survival mechanism. The people who are navigating AI disruption most effectively tend not to be the most credentialled or the most experienced. They are the ones who find the disruption itself interesting. Who pick up new tools, poke at them, figure out what they can and cannot do, and adjust their practice accordingly. Curiosity is what keeps a person in motion when the ground is shifting. It is also a compounding asset. Every capability in this framework deepens over time if curiosity is present. Systems thinking gets richer. Cross-domain synthesis gets more surprising. Contextual judgement becomes more nuanced. Without curiosity animating them, these capabilities can calcify. A person can have strong relational intelligence and stop developing it. Curiosity is what keeps the rest alive.

Psychological Safety: The enabling condition

This is the one condition that cannot be fully self-generated. Curiosity is internal. Psychological safety is where the individual meets the environment. People can be genuinely curious and still not act on it, because the culture around them signals that experimentation is risky, that not-knowing is a weakness, that being wrong is a threat to standing. In an AI context this is particularly acute. Many people are curious about these tools but carry real anxiety about being seen to not understand them, or about what their engagement with them implies for their own identity and value. That anxiety suppresses the curiosity before it becomes action. Psychological safety is not a soft cultural nicety. It is a structural prerequisite for the capabilities that follow. Organisations that want their people to develop distinctly human capability in an AI age need to ask honestly whether they have created the conditions for that. The responsibility for this sits with leadership and culture, not with individuals alone.

Agency: The sustaining condition

A felt sense that your actions matter, that the trajectory of your working life is not simply happening to you. People who have lost agency, who feel passive in relation to the changes moving through their industry or organisation, tend not to invest in developing capability even when they are curious and feel safe enough to try. Why build something if you do not believe you can direct where it goes? Agency is about authorship. It is the difference between watching AI reshape the workplace and actively deciding how to position yourself within that reshaping. It cannot be mandated, but it can be cultivated, and the conditions that support it are partly organisational and partly internal.

Curiosity initiates. Psychological safety enables. Agency sustains. Together, they create the ground from which genuinely human capability can grow.

SEVEN HUMAN-NATIVE CAPABILITIES

The following capabilities are not a skills wishlist. They are the areas where human cognition, experience, and judgement are genuinely difficult to replicate, and where that difficulty is not a temporary condition waiting for the next model release. They are also the areas where, as AI takes on more, the human contribution becomes more visible and more consequential, not less.

They are offered here not as a development checklist but as a framework for thinking about what is worth protecting, investing in, and designing for in the organisations and careers we are building.

Contextual judgement

AI can apply rules with extraordinary consistency and speed. What it struggles with is knowing when the rules should not apply. Contextual judgement is the capacity to read a situation accurately enough to make a call that departs from the expected response, and to do so in a way that is defensible and well-reasoned. It is not intuition in the loose sense. It is calibrated, experience-informed recognition that this situation is different, and that difference matters. In an AI-augmented environment, this becomes more critical rather than less. As AI handles more of the rule-following, humans are increasingly responsible for the exceptions, the edge cases, and the moments where the right move requires breaking or bending the frame entirely.

Systems thinking

AI is good at optimising within systems. It is considerably weaker at questioning the system itself. Systems thinking is the ability to see how parts connect, where leverage actually sits, and what second- and third-order effects a decision is likely to produce. It is also the capacity to notice when the frame is wrong, when the problem being solved is not the problem that matters, when an organisation is efficiently pursuing the wrong objective. This is not an abstract skill. It is what separates people who can see an organisation clearly from those who can only see their corner of it. In an environment where AI is increasingly making local optimisation decisions, the humans who can see the whole become disproportionately valuable.

Cross-domain synthesis

The most generative thinking tends to happen at the edges of fields, where a concept from one domain turns out to illuminate something in another. Cross-domain synthesis is the ability to recognise these connections and do something useful with them. It depends on a particular kind of intellectual life: wide reading, genuine curiosity about fields outside one's own, and a habit of asking "where have I seen this shape before?" when encountering a new problem. AI can surface cross-domain associations, particularly when prompted. What it cannot replicate is the kind of synthesis that emerges from lived, embodied, contextual experience across different domains over time. The connection that comes from having actually worked in two different industries, or from a decade of reading outside your field, has a different quality to a retrieved association. It is grounded in a way that pattern matching is not.

Relational intelligence

Reading people accurately, adapting to them in real time, building trust over time, navigating conflict without destroying the relationship. AI can simulate this in text with increasing sophistication. It cannot do it in the room. And most things that actually matter, decisions, commitments, culture, the resolution of difficult tensions, still get decided in the room. Relational intelligence is not charisma or social ease. It is the capacity to understand what another person needs from an interaction, what they are not saying, what they are afraid of, and to respond to that reality rather than the surface version. In organisations navigating significant change, this becomes the capability that determines whether transformation lands or stalls.

Ethical reasoning

As AI takes on more consequential work, the humans in the loop need to be genuinely equipped for ethical reasoning, not just nominally responsible for it. Ethical reasoning is not compliance. It is not applying a policy or running a decision through an ethics framework. It is the willingness to sit with genuine tradeoffs, where legitimate values are in tension and there is no clean answer, and to make a defensible call. It requires clarity about what you actually value, the intellectual honesty to acknowledge uncertainty, and the courage to act anyway. The organisations that will navigate AI integration most responsibly will be those with people who can do this well. The ones that will struggle are those that have mistaken governance for judgement.

Creative direction

Generative AI is prolifically creative in the technical sense. It produces. What it cannot do is want something. It does not have taste in any meaningful sense, does not know when something is off, cannot make a deliberate aesthetic or strategic choice from a genuine point of view. Creative direction, the ability to know what is worth making, to recognise quality, to push toward something specific rather than something merely competent, remains deeply human. This is not about artistic talent. It applies equally to product strategy, to organisational design, to the shape of a communication. The person who can look at a dozen AI-generated outputs and know which one is right, and why, and what needs to change in the others, is exercising something that the tools themselves cannot provide.

Epistemic confidence

In a world of abundant information, much of it contested, the ability to hold uncertainty well becomes a distinctive capability. Epistemic confidence is not certainty. It sits between two common failure modes: false certainty, which closes down inquiry and mistakes confidence for understanding, and epistemic paralysis, which mistakes uncertainty for an absence of grounds for action. The person with genuine epistemic confidence knows what they know, knows what they do not know, and can act decisively in the gap without pretending the gap does not exist. In an AI context, this matters in a specific way. AI produces outputs with a tone of authority that can be misleading. The people who can interrogate those outputs, who can distinguish between where the model is likely to be reliable and where it is likely to be confidently wrong, are exercising epistemic confidence in a form that is genuinely new and genuinely valuable.

WHAT THIS MEANS IN PRACTICE

This is not a framework for individual heroism. The conditions and capabilities described here do not develop in isolation. They develop in organisations that have designed for them, cultures that have made space for them, and working lives that have had the texture and variety to build them.

Which means the practical implications run in two directions simultaneously.

For individuals, the question is where on this map your development has been invested, and where it has been quietly neglected. Most people have depth in one or two of these capabilities and have not thought deliberately about the others. The more interesting question is which ones have atrophied, and why. Often the answer involves one of the three conditions: curiosity that has narrowed over time, psychological safety that has been eroded by a particular environment, or agency that has been surrendered in the face of institutions or systems that felt too large to influence.

For organisations, the question is more structural. It is whether the work design, the culture, and the leadership actually support the development of these capabilities, or whether they inadvertently suppress them. An organisation that rewards rule-following over contextual judgement, that punishes visible uncertainty, that has no tolerance for cross-domain thinking because it looks unfocused, is an organisation that is systematically degrading the very capabilities it will need most as AI takes over the rest.

The irony of the current moment is that many organisations are investing heavily in AI capability while quietly consuming the human capability that would allow them to use it well.

THE RIGHT QUESTION

The question that matters is not whether humans can compete with AI on AI's terms. That contest is settled and the answer is not encouraging.

The question is whether humans, organisations, and the systems we build together can get clear on what humans are distinctively for, and whether we can design working lives and institutions that actually develop and protect those things.

That requires curiosity about the change that is already underway. It requires environments where people feel safe enough to engage rather than avoid. And it requires a felt sense of agency, an understanding that this is not something happening to us, but something we are, slowly and imperfectly, helping to shape.

The capabilities that follow from those conditions are not soft. They are the hard problems that AI consistently cannot resolve alone. They are also, perhaps not coincidentally, the things that make work feel like it is worth doing.

That seems like a reasonable place to start.

If this framework resonates and you'd like to explore what it means for your organisation, reach out via info@dialecticalconsulting.com.au or contact me via LinkedIn.